Wikipedia Summary

David Leadbeater

<dgl@dgl.cx>

Wikipedia

- Wikipedia is great

- Most of you have probably edited it

- Wonderful source of information

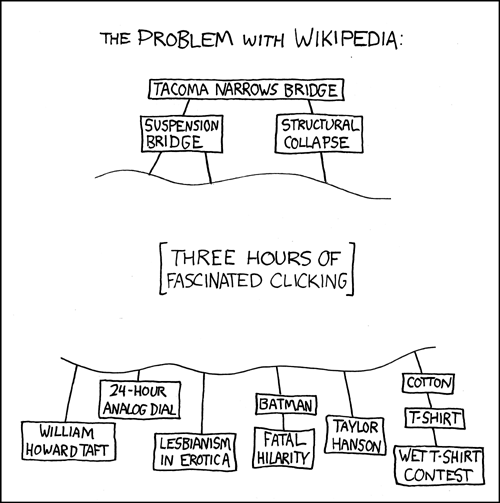

- Sometimes a little too detailed

(From xkcd, CC BY-NC.)

A slight time sink

Simple idea

- Generate summaries of Wikipedia articles

- Google already sort of do this, by crawling Wikipedia and extracting the text

- Not really something I can (or want to) do; Google's data isn't freely available

- Wikipedia already offer "abstracts for Yahoo", but the quality is fairly low (or was when I looked at this originally).

How?

- Wikipedia offer XML data dumps

- Parse::MediaWikiDump parses these

- I wrote Text::Summary::MediaWiki

- Store it all in an SQLite database

Works best if the page conforms to the Lead section guidelines, but most do.

Wikipedia is quite big; the database is around 2GB now, partly due to the full-text search (FTS) index.
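
The talk's pipeline uses Perl modules (Parse::MediaWikiDump and Text::Summary::MediaWiki) whose code is not shown here. As a rough illustration of the same steps in Python, the sketch below parses a tiny made-up dump, keeps only the text before the first section heading, strips a little markup, and stores the result in SQLite. The `lead_summary` and `build_database` names, the `summary` table layout, and the sample XML are assumptions for illustration; real dumps use a namespaced root element, and the FTS index is left out.

```python
import io
import re
import sqlite3
import xml.etree.ElementTree as ET

# Tiny stand-in for a real dump (hypothetical content, no XML namespace).
SAMPLE_DUMP = """<mediawiki>
  <page>
    <title>Perl</title>
    <revision><text>'''Perl''' is a [[programming language]] by [[Larry Wall|Wall]].

== History ==
Development began in 1987.</text></revision>
  </page>
</mediawiki>"""


def lead_summary(wikitext):
    """Return a rough plain-text version of the lead section."""
    # Everything before the first "== Heading ==" line is treated as the lead.
    lead = re.split(r"^==", wikitext, maxsplit=1, flags=re.MULTILINE)[0]
    # [[target|label]] -> label, [[target]] -> target
    lead = re.sub(r"\[\[(?:[^|\]]*\|)?([^\]]*)\]\]", r"\1", lead)
    # Drop bold/italic quote markup.
    lead = re.sub(r"'{2,}", "", lead)
    return " ".join(lead.split())


def build_database(dump_file, db):
    """Stream pages out of the dump and store one summary per page."""
    db.execute(
        "CREATE TABLE IF NOT EXISTS summary (name TEXT PRIMARY KEY, text TEXT)"
    )
    for _event, elem in ET.iterparse(dump_file):
        if elem.tag == "page":
            title = elem.findtext("title")
            text = elem.findtext("revision/text") or ""
            db.execute(
                "INSERT OR REPLACE INTO summary VALUES (?, ?)",
                (title, lead_summary(text)),
            )
            elem.clear()  # release the page's subtree once it is stored
    db.commit()


db = sqlite3.connect(":memory:")
build_database(io.BytesIO(SAMPLE_DUMP.encode("utf-8")), db)
print(db.execute("SELECT text FROM summary WHERE name = ?", ("Perl",)).fetchone()[0])
```

Templates, references, and infoboxes are ignored entirely in this sketch, which is exactly where real MediaWiki markup gets difficult.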

Querying

- I had an idea: make the summaries available over DNS

- Not quite as mad as it sounds

- TXT records work very well for this, caching is free

- Easy to query from command line, Perl, etc

- Demo
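
One detail that matters when putting text in TXT records: each character-string inside a TXT record is limited to 255 bytes (RFC 1035), so a longer summary has to be carried as several strings and rejoined by the client. How the service actually packs its summaries is not stated in these slides; the Python sketch below only illustrates the constraint, and the function names are made up.

```python
MAX_TXT_STRING = 255  # byte limit of one character-string in a TXT record


def to_txt_strings(summary):
    """Split a summary into byte strings that each fit one TXT character-string."""
    data = summary.encode("utf-8")
    return [data[i:i + MAX_TXT_STRING] for i in range(0, len(data), MAX_TXT_STRING)]


def from_txt_strings(strings):
    """Rejoin the strings of a TXT record into the original summary."""
    # Join the bytes first, then decode, so a multi-byte character that was
    # split across two strings is still decoded correctly.
    return b"".join(strings).decode("utf-8")


summary = "Perl is a high-level, general-purpose programming language. " * 8
parts = to_txt_strings(summary)
print(len(parts), [len(p) for p in parts])
assert from_txt_strings(parts) == summary
```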

But soon..

- Not all applications can query TXT records, so HTTP would be useful too

- Wrote a JSON/JSON-P interface

HTTP interface

- GET http://js-wp.dg.cx/json/Perl

- {"text":"In computer programming, Perl is a high-level, general-purpose, interpreted, dynamic programming language. Perl was originally developed by Larry Wall, a linguist working as a systems administrator for NASA, in 1987, as a general purpose Unix scripting language to make report processing easier.Since then, it has undergone many changes and revisions and became widely popular...","url":"http://en.wikipedia.org/wiki/Perl","name":"Perl","id":""}
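
A client only needs to fetch that URL and decode the JSON. The Python sketch below uses the endpoint and field names from the example above; the helper names are made up, the sample body is an abbreviated copy of the response, and `fetch_summary` assumes the service is still reachable, so it is defined but not called here.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "http://js-wp.dg.cx/json/"  # endpoint shown on the slide


def summary_url(article):
    """Build the request URL for an article name."""
    return BASE_URL + urllib.parse.quote(article)


def parse_summary(body):
    """Decode a response body into (name, text, url)."""
    record = json.loads(body)
    return record["name"], record["text"], record["url"]


def fetch_summary(article, timeout=5.0):
    """Fetch and decode a summary over HTTP (needs network access)."""
    with urllib.request.urlopen(summary_url(article), timeout=timeout) as response:
        return parse_summary(response.read().decode("utf-8"))


# Abbreviated copy of the response shown above.
sample = (
    '{"text":"In computer programming, Perl is ...",'
    '"url":"http://en.wikipedia.org/wiki/Perl","name":"Perl","id":""}'
)
name, text, url = parse_summary(sample)
print(name, url)
```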

Greasemonkey

- Add a title to any link to (or within) Wikipedia

- Uses the HTTP interface and a background HTTP request

Demo.

Script is at https://dgl.cx/2006/09/wikipedia-summary.user.js.

Currently it doesn't do anything clever, so if the title isn't retrieved within the title display timeout (200ms), it won't be displayed.

To Do

- Parsing MediaWiki markup is hard

- Use Wikipedia API to get more up-to-date data

- Work out a way to expose the FTS index (just searches on summaries)

- Release all the code

- Templates are hard to get right.

- Dumps aren't very frequent.

- Only done for English.

Questions?

- Thank you

- https://dgl.cx/wikipedia-dns